Alexa good, Your IVR bad

Natural Language Understanding (NLU) has made monumental leaps over the past few years. Kicking off the rapid adoption by mainstream customers was the 'voice user interface' (VUI), with the release of Apple's Siri in 2011 and Google Now, the predecessor of Google Assistant, in 2012. Amazon introduced smart speakers to the world with the Alexa-powered Echo in 2015, followed by Google Home in 2016. As of the end of 2018 (according to a TechCrunch report), 41% of Americans had a smart speaker in their home.

Customers LOVE VUI, as evidenced by their purchases, but they still HATE your Speech IVR.

Why do consumers love Alexa but hate your IVR?

3 Key Reasons: Natural Language Understanding, Relevance & Depth.

Natural Language Understanding:

NLU is a big topic. For now, we'll focus on a key difference between how Siri/Alexa/Google recognize a speech utterance and how your current contact center IVR recognizes one.

When speaking naturally from person to person, or when speaking with our favorite smart speaker, we include multiple pieces of information in a single sentence. For example, the sentence "let's grab coffee tomorrow at Blue Bottle around noon" contains 4 key pieces of information:

- action => "grab coffee"

- location => "Blue Bottle"

- date => "tomorrow"

- time => "noon"

Your current IVR would need 4 separate questions to get that simple information down. Siri/Alexa/Google, however, each utilize a method of NLU called 'slot filling' to improve understanding.

Slot filling takes a complete sentence, parses the information into groups known as 'slots', and fills each piece of data into the relevant slot (similar to filling out a form). It allows applications to understand many data inputs at once, leading to a much more natural conversation. If a required slot is left empty, a follow-up question asks only about that slot. For example, if 'time' were a required slot but the sentence didn't include a time, then 3 of the 4 slots would be filled and the follow-up question would ask only for the time.
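To make the idea concrete, here is a minimal sketch of slot filling in Python. It is an illustration only: the regular expressions, the `fill_slots` and `next_prompt` helpers, and the prompt wording are all assumptions made for this example. Siri/Alexa/Google rely on trained language models rather than hand-written patterns like these.

```python
import re

# Hypothetical patterns for illustration; real NLU engines use trained models.
SLOT_PATTERNS = {
    "action": re.compile(r"\b(grab coffee|get lunch|have dinner)\b", re.I),
    "location": re.compile(r"\bat ([A-Z][\w']*(?: [A-Z][\w']*)*)"),
    "date": re.compile(r"\b(today|tomorrow|tonight)\b", re.I),
    "time": re.compile(r"\b(noon|midnight|\d{1,2}(?::\d{2})?\s?(?:am|pm))\b", re.I),
}

# Every slot is treated as required in this sketch.
REQUIRED_SLOTS = ("action", "location", "date", "time")

# Hypothetical follow-up questions, one per slot.
FOLLOW_UP_PROMPTS = {
    "action": "What would you like to do?",
    "location": "Where would you like to go?",
    "date": "Which day works for you?",
    "time": "What time works for you?",
}


def fill_slots(utterance, slots=None):
    """Fill any still-empty slots from the utterance and return the result."""
    slots = dict(slots or {})
    for name, pattern in SLOT_PATTERNS.items():
        if name in slots:
            continue  # never overwrite a slot the caller already filled
        match = pattern.search(utterance)
        if match:
            slots[name] = match.group(1)
    return slots


def next_prompt(slots):
    """Return the question for the first missing required slot, or None."""
    for name in REQUIRED_SLOTS:
        if name not in slots:
            return FOLLOW_UP_PROMPTS[name]
    return None


if __name__ == "__main__":
    slots = fill_slots("let's grab coffee tomorrow at Blue Bottle")
    print(slots)               # three of the four slots are filled
    print(next_prompt(slots))  # only the missing 'time' slot is asked about
    slots = fill_slots("around noon", slots)
    print(next_prompt(slots))  # nothing left to ask
```

The design point is that the conversation state is just the dictionary of slots: each new utterance fills whatever it can, and the application asks only about what is still missing.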

Relevance:

Relevance in this context means providing information which is up to date and tailored specifically to the individual customer, not generic, dated and generally unhelpful information.

Call center agent scripts change often: updates can be made monthly, weekly, or even daily. These changes often come from marketing, product, or security & compliance teams. Legacy IVRs, however, are often implemented as big one-time waterfall projects which, once complete, are ignored and left to become outdated and hated. As a result, IVR performance declines over time: the IVR stays fixed while customer needs change.

When we ask Siri/Alexa/Google to perform a task, e.g. "Hey Google, is my flight on time?", it doesn't have to ask a bunch of follow-up questions. These services are so deeply integrated with your other applications that, without any follow-up questions, they can identify the user (e.g. using 'voice match' for multiple users in a home on Google), check your email or calendar to find the flight number and date, query the airline for the current status, and play back an answer.

An amazing IVR should be integrated with all the customer touch points of your business to provide relevance. The answers to questions such as 'What did this customer call about last time?', 'What is the current billing/account/order status of this customer?', and 'What did this customer search the company website/social site for recently?' can all be pulled into the IVR via APIs.
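As a rough sketch of what that integration could look like, the Python below gathers context for a caller before the IVR says its first word. Everything here is hypothetical: the in-memory dictionaries stand in for calls to your CRM, order, and web analytics APIs, and the `CallerContext`, `build_context`, and `opening_prompt` names are invented for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Stand-ins for real API calls to CRM, order, and web analytics systems.
CRM_LAST_CALL = {"cust-42": "a late delivery"}
ORDER_STATUS = {"cust-42": "out for delivery"}
WEB_SEARCHES = {"cust-42": ["track my order"]}


@dataclass
class CallerContext:
    """What the business already knows about the caller."""
    customer_id: str
    last_call_reason: Optional[str] = None
    order_status: Optional[str] = None
    recent_searches: List[str] = field(default_factory=list)


def build_context(customer_id):
    """Collect what each touch point knows about this customer."""
    return CallerContext(
        customer_id=customer_id,
        last_call_reason=CRM_LAST_CALL.get(customer_id),
        order_status=ORDER_STATUS.get(customer_id),
        recent_searches=WEB_SEARCHES.get(customer_id, []),
    )


def opening_prompt(ctx):
    """Pick the most relevant greeting, falling back to a generic one."""
    if ctx.order_status:
        return (f"Your latest order is {ctx.order_status}. "
                "Is that what you're calling about?")
    if ctx.last_call_reason:
        return (f"Last time you called about {ctx.last_call_reason}. "
                "Is this about the same issue?")
    return "How can I help you today?"


if __name__ == "__main__":
    print(opening_prompt(build_context("cust-42")))
    print(opening_prompt(build_context("unknown-caller")))
```

A known customer hears a greeting tailored to their open order, while an unrecognized caller gets the generic prompt, so the lookup adds relevance without ever blocking the call.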

Depth:

As of this writing, Alexa has 70,000 skills. Think of a 'skill' as one of the abilities available on a smart speaker. For example, the ability to check the weather, add a calendar event, or play music from Pandora are each a skill. Historically, in the contact center world, we have referred to these skills as 'call flows'. Smart speakers that customers love have a tremendous and growing depth of skills.

How many skills can your IVR perform? Two, four, or maybe six? Are customers actually taking advantage of these skills, or are they getting in and then hitting zero repeatedly to get out? Are the skills relevant to the actual needs of your customers? IVRs can be one of the best revenue-generating and/or cost-saving levers in your contact center, but if left stagnant they will become outdated and hated.

What now:

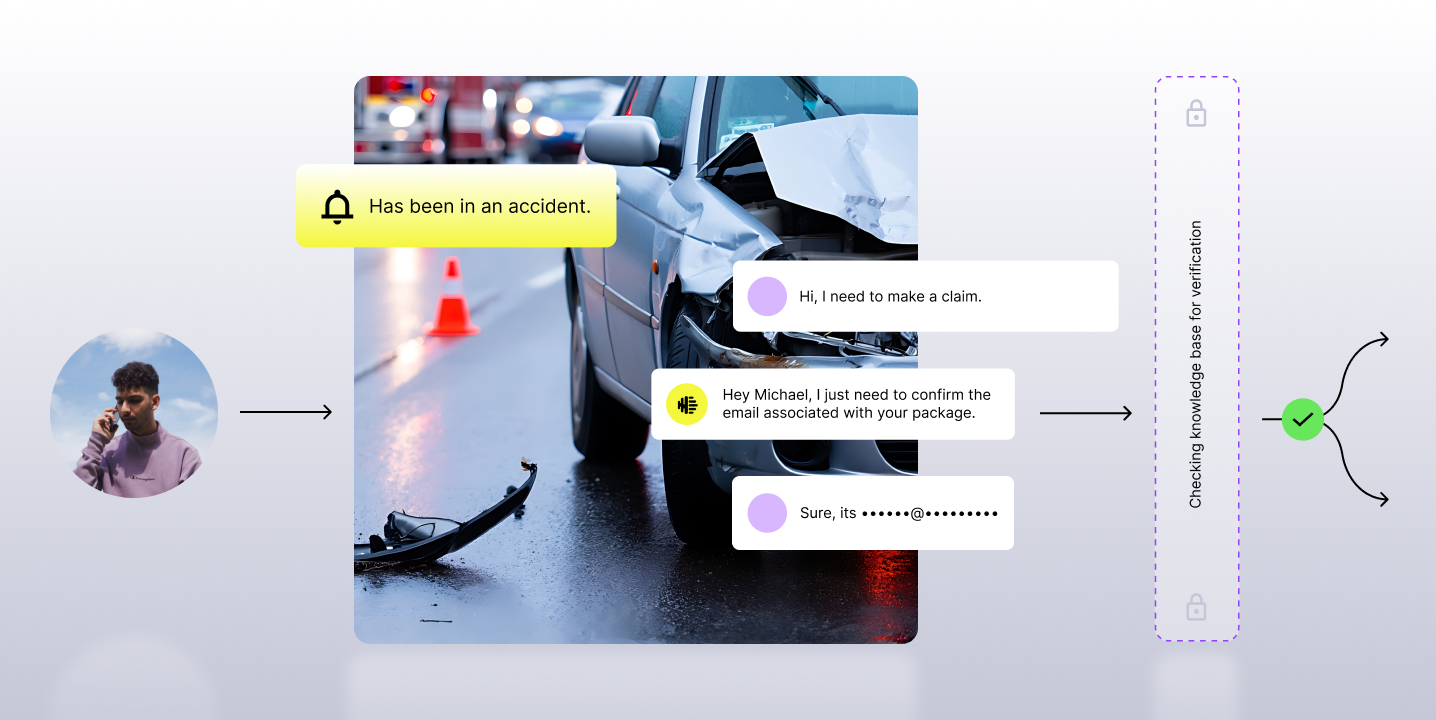

Observe.AI provides a Cloud Voice AI platform for call centers which uses deep learning and natural language processing (NLP) to unlock and pull deep insights from call center conversations. This allows us to automate Compliance Verification, Keyword Spotting, Sentiment Analysis, Quality Analysis, and more.

With Observe.AI's powerful platform we gain insights into contact center calls, letting us see why customers are calling and which agent responses lead to the best customer outcomes. This deep voice learning is fed directly into our AI voice bots (think modern smart IVR), which continuously learn, grow, and adapt to changing customer needs. Learn more about how Observe.AI improves upon traditional IVR systems with AI Voice Agents.

About the Author: Christian Wathne leads the Solutions vertical at Observe.AI and has years of experience in the technology side of Voice IVRs.
